How to Compare ML Experiment Tracking Tools to Fit Your Data Science Workflow

- Guy Smoilovsky

- 10 min read

- 5 years ago

Co-Founder & CTO @ DAGsHub

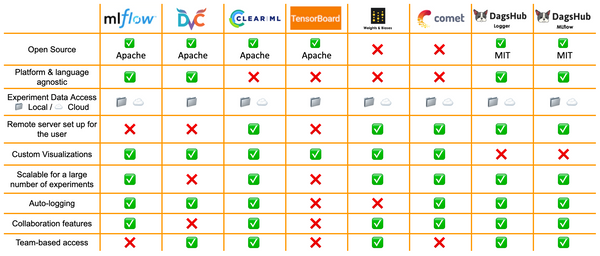

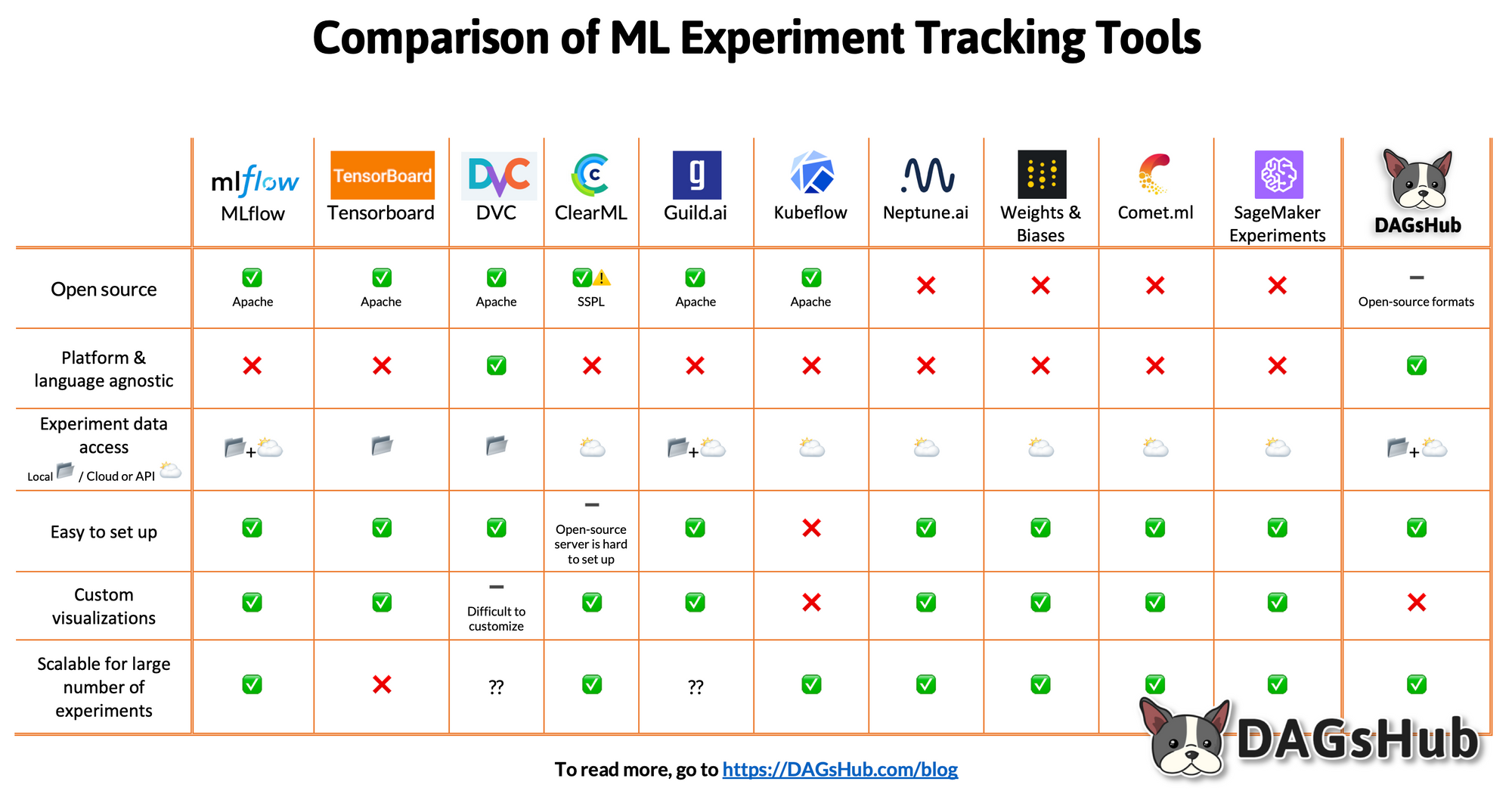

TL;DR: Many ML experiment tracking tools are available, each suited to different data science workflows. We offer a way to compare the alternatives and make an informed choice, and we review some of the most popular tools. This post covers:

- The factors to use when comparing tools

- The types of solutions available

- The pros and cons of each tool

- A useful summary table to share

The rise of experiment tracking in the data science workflow

There is an ever-expanding number of tools to track experiments. Research shows that when we are confronted with a large number of (good) options we tend to get overwhelmed. Choice overload is a real thing. Imagine walking into a supermarket wanting to buy a package of cookies. In the cookie aisle, there are fudge-covered, fat-free, double stuffed, mint-flavored - and those are only the Oreos! The simple task of buying cookies can become paralyzing.

Similarly, the confounding array of options for tracking experiments can lead to suboptimal decisions. Choice overload makes decisions harder: we procrastinate, we put off choosing, and, even worse, we are less satisfied with the choices we do make.

How to choose the best ML experiment tracking tool

If you are running experiments as part of your data science workflow, you already know how important tracking them is. Experimentation is iterative, so it has to be tracked in a structured fashion. Even on simpler projects, without proper tools you’ll quickly end up with a mess.

The key question is which of the numerous options out there is best suited for you and your data science workflow. There are a lot of them, much like the cookies described above. We’ll start by covering some of the different factors and considerations when choosing between experiment tracking tools and then we’ll outline the pros and cons of each solution.

What factors to consider?

Based on our analysis, we suggest comparing experiment tracking tools on six key factors. You can also watch the video below on these six points.

1. What will I be tracking?

Many different factors have to be carefully collected, tracked, and saved in order to reproduce a result with the same code and model. Changing a single input can lead to different results.

A non-exhaustive list of factors to track in your experiments might look something like this:

- Hyperparameters

- Models

- Code files

- Metrics

- Environment

Additionally, for some projects, you might track yet more information such as model weights, prediction distributions, model checkpoints, and hardware resources.

It’s important to check and make sure that the platform you choose covers and tracks all the different elements your data science workflow requires. This is arguably the most acute challenge in experiment tracking, namely saving all the data required and not missing important information.
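As a rough illustration of the list above, the sketch below bundles hyperparameters, metrics, a model path, the code version, and basic environment details into one record. It uses only the Python standard library; the `RunRecord` class, its field names, and the `current_git_commit` helper are hypothetical and do not correspond to any tool reviewed in this post.

```python
import json
import platform
import subprocess
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def current_git_commit() -> str:
    """Return the current Git commit hash, or "unknown" if Git is unavailable."""
    try:
        result = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            capture_output=True,
            text=True,
            check=True,
        )
        return result.stdout.strip() or "unknown"
    except (OSError, subprocess.CalledProcessError):
        return "unknown"


def describe_environment() -> dict:
    """Collect a minimal description of the runtime environment."""
    return {
        "python": platform.python_version(),
        "platform": platform.platform(),
    }


@dataclass
class RunRecord:
    """Hypothetical container for everything needed to revisit one run."""

    name: str
    hyperparameters: dict
    metrics: dict = field(default_factory=dict)
    model_path: str = ""
    code_version: str = field(default_factory=current_git_commit)
    environment: dict = field(default_factory=describe_environment)
    started_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize the record so it can be stored or shared."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)
```

A record could then be created with something like `RunRecord(name="baseline", hyperparameters={"lr": 0.01})` and filled in as training progresses. A real tool would typically capture far more (installed package versions, data versions, hardware), so treat this as a checklist in code form rather than a complete solution.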

2. Where is my data being saved?

Different tools and platforms save data differently. Some rely on external servers, while others rely on local folders with unstructured text files. The more automatic the logging, the better: the more manual logging is required, the more likely it is that something will be forgotten or go wrong.
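To make the "local folder" option concrete, here is a minimal sketch that appends each run to a JSON Lines file on disk and reads the runs back. The file layout and the `append_run` and `load_runs` helpers are assumptions made for illustration; server-based tools replace this file with calls to a remote service.

```python
import json
from pathlib import Path


def append_run(log_path: Path, run: dict) -> None:
    """Append one run as a single JSON line, creating the folder if needed."""
    log_path.parent.mkdir(parents=True, exist_ok=True)
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(run, sort_keys=True) + "\n")


def load_runs(log_path: Path) -> list:
    """Read all runs back in the order they were logged."""
    if not log_path.exists():
        return []
    with log_path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

Calling `append_run(Path("experiments/runs.jsonl"), {...})` from the training script itself, rather than copying numbers by hand, is the kind of automation that reduces the risk of forgetting something.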

3. Visualizations

A key element of any tracking tool is its ability to represent experiments visually. A good visual representation lets you analyze and interpret results more quickly. The right graph can also help communicate results to others, especially stakeholders with a non-technical background. Visualization is an extremely effective way to show complex data concisely and clearly. Some tools offer great visualization capabilities; others, not so much.

4. Ease of Use

Convenience is an important factor that shouldn’t be overlooked. A tool might be really powerful and track every single piece of data, yet be a nightmare to use and overkill for your data science workflow. UI/UX matters here too: some people place a premium on customizability, while others prioritize a clean and elegant interface.

5. Stability

Some tracking tools are designed to support huge enterprises that require stable and mature solutions that can be depended on. Other tools are newer and might offer more advanced features, but are potentially less stable than some of the established solutions. Different users might balance the amount of risk/reward they are willing to entertain.

6. Scale

The experiment tracking needs of a single user can’t be compared to those of a huge team working collaboratively with multiple data science workflows. A team might need all of their data to be stored in a single repository that is accessible to the whole team. Thus, everyone has one source of information and members of the team can see what others are working on and experimenting with. On the other hand, an individual working alone might not need these features and might prefer to store all the data locally.
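One way to turn the six factors into a decision is a simple weighted scoring matrix. The sketch below is only an illustration: the factor keys, the 1 to 5 scale, and the example weights and scores are placeholders you would replace with your own judgment, not ratings of any real tool.

```python
# The six factors discussed above, used as dictionary keys.
FACTORS = [
    "what_is_tracked",
    "storage",
    "visualizations",
    "ease_of_use",
    "stability",
    "scale",
]


def weighted_score(scores: dict, weights: dict) -> float:
    """Weighted average of per-factor scores (assumed to be on a 1-5 scale)."""
    total_weight = sum(weights[f] for f in FACTORS)
    return sum(scores[f] * weights[f] for f in FACTORS) / total_weight


def rank_tools(tools: dict, weights: dict) -> list:
    """Return (tool name, score) pairs sorted from best to worst."""
    scored = [(name, weighted_score(s, weights)) for name, s in tools.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Placeholder weights: this hypothetical team cares most about ease of use.
example_weights = {f: 1.0 for f in FACTORS}
example_weights["ease_of_use"] = 2.0

# Placeholder scores for two made-up tools.
example_tools = {
    "tool_a": {f: 3 for f in FACTORS},
    "tool_b": {**{f: 3 for f in FACTORS}, "ease_of_use": 5},
}

ranking = rank_tools(example_tools, example_weights)
```

A solo practitioner might give `scale` a low weight and `ease_of_use` a high one, while a large team might do the opposite. The point of writing the weights down is that the tradeoff becomes explicit and easy to discuss.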

What tool fits best in your data science workflow?

There are a lot of tools out there to help track experimentation with different features and approaches. Broadly speaking, you can focus on one of three main options.

1. Open Source

- Advantages: Free, customizable, community support, open standards, avoids lock-in.

- Disadvantages: Hard to “scale”, which can mean difficulty sharing work across a team or sustaining long-term projects; usability concerns; lack of expert support.

2. Commercial

- Advantages: Good UI + UX, ease of use, stability, tailored support.

- Disadvantages: Pricey, limited customization, may lead to lock-in and dependency.

3. Platform-Specific

- Advantages: Integrates well with the platform, simple.

- Disadvantages: Only works well with the matching platform.

These categories illustrate the tradeoffs between commercial, proprietary solutions and open-source ones. The tradeoff runs deeper than one being free and the other not. Often its crux is flexibility versus usability. Open-source software offers more flexibility and customization for your data science workflow but requires greater effort to use. Commercial solutions offer less flexibility but are generally easier to use right away. You can read more about this here and here.

While examining the specific tools below, we recommend keeping in mind the main differences between the three main categories outlined above.

Reviewing ML experiment tracking tools

Open Source

MLflow

Description: MLflow is an open-source platform that enables you to manage the entire ML lifecycle. Specifically, MLflow Tracking is an API and UI for logging a model’s parameters, metrics, and even the model itself, along with various other artifacts, during training. MLflow Tracking can be used in any environment and can log results either to local files or to a server. The UI can be used to view and compare results from numerous runs and different users.
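As a rough sketch of what logging with MLflow Tracking can look like, the example below logs parameters and per-epoch metrics for one run. It assumes the `mlflow` package is installed. The `flatten_params` and `log_run` helpers, the config layout, and the optional tracking URI argument are hypothetical names chosen for illustration; only `mlflow.set_tracking_uri`, `mlflow.start_run`, `mlflow.log_params`, and `mlflow.log_metric` are MLflow calls.

```python
def flatten_params(config: dict, prefix: str = "") -> dict:
    """Flatten a nested config into dot-separated keys, one per parameter."""
    flat = {}
    for key, value in config.items():
        name = f"{prefix}.{key}" if prefix else str(key)
        if isinstance(value, dict):
            flat.update(flatten_params(value, name))
        else:
            flat[name] = value
    return flat


def log_run(config: dict, metrics_per_epoch: list, tracking_uri: str = "") -> None:
    """Log one run's parameters and per-epoch metrics with MLflow Tracking."""
    import mlflow  # imported here so the helper above works without MLflow

    if tracking_uri:
        # Point MLflow at a tracking server; if omitted, MLflow uses its
        # default local store.
        mlflow.set_tracking_uri(tracking_uri)

    with mlflow.start_run():
        mlflow.log_params(flatten_params(config))
        for epoch, metrics in enumerate(metrics_per_epoch):
            for key, value in metrics.items():
                mlflow.log_metric(key, value, step=epoch)
```

A call such as `log_run({"model": {"lr": 0.01}}, [{"loss": 0.9}, {"loss": 0.7}])` would record one parameter and two loss values. Flattening is a design choice here, not something MLflow requires: it simply makes each nested value show up as its own parameter when runs are compared.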

Advantages:

- As an open-source project, MLflow is highly customizable and can be made to fit your data science workflow.

- Built to work with any ML library, algorithm, deployment tool, or language.

- Easy to add MLflow to existing ML code.

- Has a very large and active community behind it and is very widely adopted in the industry.

Cautions:

- Limited access controls and support for multiple projects compared to other solutions.

- UPDATE: You can now get easy access controls for MLflow using DAGsHub's new integration!

- The UI of MLflow could be improved and visualizations are more limited. This can make sharing information with non-technical stakeholders challenging.

TensorBoard

Description: TensorBoard is TensorFlow’s visualization and tracking toolkit. TensorBoard is open-source and widely integrated with other tools and applications.

Advantages:

- Offers a large library of pre-built tracking tools and integrates easily with many other platforms.

- Good visualizations help enable good information sharing.

- A large community that creates robust community support and problem-solving.

Cautions:

- Some may find it complex to use with a long learning curve.

- It may not scale well to a large number of experiments: it slows down when used to view and track large-scale experimentation.

- Designed for single-user, local machine usage, not team usage.

DVC

Description: DVC (Data Version Control) is an MLOps tool for data versioning and pipeline management. DVC is free, open-source, and platform-agnostic. It is easy to use and doesn’t require special infrastructure or external services. DVC isn’t focused on experiment tracking but does have some tracking features (for more information, check out our blog on data versioning tools).

Advantages:

- Easily adaptable to work with all languages and frameworks.

- Ability to version and process large amounts of data.

- Easy to use and customizable data science workflow.

Cautions:

- The tool dedicated to experiment tracking is relatively new and experimental, so it may need time to mature.

- Experiment tracking is somewhat tied with DVC data versioning and pipeline tools which you might prefer not to use.

ClearML

Description: ClearML is an open-source platform that provides ML researchers with the tools to manage the entire ML lifecycle. ClearML is customizable and integrates with whatever tools a team is already using.

Advantages:

- Easy to add auto-logging into many libraries.

- Customizable UI that enables users to sort models by different metrics.

Cautions:

- Because auto-logging requires a large number of modifications (ClearML replaces many built-in functions of other frameworks), the system may be comparatively fragile.

- Installing the open-source version on your own servers is relatively complicated (compared to MLflow).

Guild AI

Description: Guild AI is an open-source experiment tracking system for machine learning. Guild AI is very lightweight, runs externally to your code, and doesn’t require any changes to it. Guild doesn’t require any additional software or systems such as databases or containers.

Advantages:

- All results are saved on a locally mounted file system and rely on external configuration, so there are no installation or maintenance costs.

- This also helps you avoid being locked in.

- Designed to get started quickly and easily, and can be configured to whatever the user and their data science workflow require.

Cautions:

- It may be difficult to work collaboratively as a team on projects, as the platform is geared towards single users.

- Alternatives may be considered to have better UI/UX.

- Visualizations are pretty basic.

Kubeflow

Description: Kubeflow is the machine learning toolkit for Kubernetes. It is an open-source framework based on the way Google runs TensorFlow internally. Kubeflow is powerful and offers very detailed and accurate tracking. Kubeflow isn’t focused on experiment tracking but does have some tracking features.

Advantages:

- Kubeflow is a good fit for Kubernetes users.

- Highly scalable and offers great hyperparameter tuning.

Cautions:

- Requires adapters to operate and maintain the Kubernetes cluster.

- Assumes a high degree of competency with Kubernetes.

- Kubeflow is a tool for advanced teams of ML engineers but may be challenging and unintuitive for others.

- May have more limited features around experiment tracking compared to other solutions.

Commercial Solutions

The main consideration for commercial solutions is around ease of use, price, and more advanced user interfaces.

Neptune

Description: Neptune is a lightweight experiment tracking tool. Neptune is built for research and production teams and large-scale operations. Neptune enables you to see and debug experiments live as they run.

Advantages:

- Flexible and works well with other frameworks.

- Good platform for sharing with a team and collaborating on projects. Visualizations enable sharing information with managers and non-technical stakeholders.

- Convenient and easy-to-use UI/UX.

Cautions:

- As a commercial platform, Neptune may offer less customization than open-source alternatives.

- Paid solutions may be expensive for some.

Weights and Biases

Description: Weights and Biases is a powerful experiment tracking tool that tracks and logs all the information you need for your projects. Weights and Biases allows you to track, compare, and visualize ML experiments. It integrates easily with many popular libraries.

Advantages:

- Intuitive and customizable UI allows for teams to visualize and organize their data science workflow.

- Easy platform and sharing features enable you to work collaboratively on projects.

- Easily integrates with other platforms and tools.

Cautions:

- As a commercial platform, Weights and Biases may offer less customization than open-source alternatives.

- Paid solutions may be expensive for some.

Comet

Description: Comet is a self-hosted and cloud-based ML platform that offers experiment tracking. Comet is lightweight and platform agnostic.

Advantages:

- Has great features for sharing work and team data science workflows.

- Integrates well with other ML libraries and platforms.

- Offers real-time metrics and charts of the experiments being run.

Cautions:

- May lack some features for automatic logging.

- The free tier offers only one free private project.

Platform-Specific

Amazon SageMaker

Description: Amazon SageMaker is a set of tools that covers a broad range of capabilities for the entire ML pipeline. Specifically, Amazon SageMaker Experiments is the component that tracks experiments.

Advantages:

- Integrates seamlessly with AWS for those who already use it.

- Very clean and easy to use interface.

- Easy to use and convenient for those using AWS.

Cautions:

- For those who don’t use AWS, SageMaker may integrate poorly with other frameworks.

- Requires some special infrastructure and depends on APIs.

DAGsHub (that’s us!)

Description: DAGsHub is a platform for data science version control, teamwork, review, and open source collaboration. Naturally, one of the features required to achieve these goals is experiment tracking.

Advantages:

- Relies on simple, transparent, and open formats. This means an auto-logging library exists, but it’s easy to adapt to any language/framework and export with a simple Git push.

- Integrated with Git – experiments are automatically reproducible and connected to their code, data, pipelines, and models.

- Teams get a central location to visualize, compare, and review their experiments, without needing to set up any infrastructure.

- Detects and supports DVC’s metrics and params file formats.

- Free

- UPDATE: Now also supporting MLflow experiments.

Disadvantages:

- Auto-logging capabilities are still relatively limited, supporting only PyTorch Lightning and fast.ai v2.

- There is no way to create advanced or custom visualizations yet. The available visualizations are limited to numbers, bar charts, line charts, and parallel coordinate plots.

Summary – Optimizing experiment tracking for your data science workflow

Tracking your experiments in an efficient and organized manner is crucial. Having to recreate a model from a couple of months ago that holds an important result for your project is a frustrating situation that can be avoided with some foresight. At the same time, having so many experiment management tools and platforms to choose from can be more of a hindrance than a help. It can be hard to tell them apart, which can keep us from making a decision and picking one. We hope this post helps you discover different experiment tracking tools and pick the one that best fits your data science workflow.