A prompt is any input or query given to an AI (Artificial Intelligence) model, in particular an LLM (Large Language Model), in order to elicit a specific response or action. Prompt management aims to structure these inputs so that the LLM-generated output is as accurate and relevant as possible.

What is Prompt Management?

Prompt management involves the orchestration of prompts across their lifecycle, from creation through refinement to everyday use. It includes defining prompt templates, refining input phrasing, evaluating model responses, and continuously improving the quality of these prompts. The goal is to ensure that AI models generate responses that align with the user’s expectations or the system’s requirements. In practice, this can include selecting the right format, length, and content for prompts while considering contextual factors like tone, specificity, and clarity.
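
As a rough illustration of what a prompt template can look like, here is a minimal Python sketch built on the standard library's `string.Template`. The field names (`tone`, `topic`, `audience`, `max_words`) and the `render_prompt` helper are assumptions made for this example, not part of any particular prompt management tool.

```python
from string import Template

# Hypothetical template: the placeholder names are illustrative only.
BLOG_PROMPT = Template(
    "Write a $tone blog post about $topic for $audience. "
    "Keep it under $max_words words."
)


def render_prompt(template: Template, **fields: object) -> str:
    """Fill in the template; raises KeyError if a placeholder is missing."""
    return template.substitute(**fields)


prompt = render_prompt(
    BLOG_PROMPT,
    tone="friendly",
    topic="LLMs",
    audience="software engineers",
    max_words=500,
)
print(prompt)
```

Because `substitute` fails loudly on a missing field, an incomplete prompt is caught before it ever reaches the model.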

Managing prompts becomes particularly important when dealing with complex AI systems, as different types of prompts can lead to varied model behaviors. By continuously refining the way prompts are structured, teams can steer AI systems toward more relevant, context-aware, and accurate outputs.

In AI/ML models, particularly LLMs, prompts serve as the gateway through which the models receive input and generate responses. These models rely on prompts to understand what task is being asked of them and to interpret the underlying intent of the user or system initiating the interaction. As models become more powerful, prompt management is becoming one of the most important tools for controlling AI behavior and improving the alignment between model outputs and user expectations. Two common types of prompts are described below.

User-input prompts

These are the most common prompts, provided by users interacting with an AI system. They are usually direct queries or commands that a user gives to the model. For example, “What is the capital of India?” or “Generate a blog post about LLMs.”

System-generated prompts

In certain AI applications, especially in environments involving automation or large-scale data processing, the system itself may generate prompts to trigger further actions. These are often predefined or dynamically generated by the system, based on certain rules or conditions. For instance, in a chatbot application, a system-generated prompt might ask, “Do you need help with anything else?” based on the previous user interaction.
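
The rule-based case can be sketched in a few lines of Python. The function name `follow_up_prompt`, the closing phrases, and the 60-second idle threshold are all hypothetical choices for this example; a real chatbot would define its own rules and conditions.

```python
from typing import Optional

# Illustrative phrases that suggest the user has finished their request.
CLOSING_PHRASES = {"thanks", "thank you", "that's all"}


def follow_up_prompt(last_user_message: str, idle_seconds: float) -> Optional[str]:
    """Return a system-generated prompt, or None if no rule applies."""
    text = last_user_message.strip().lower()
    # Rule 1: the user signals they are done, so offer further help.
    if text in CLOSING_PHRASES:
        return "Do you need help with anything else?"
    # Rule 2: the user has been idle for a while (threshold is an assumption).
    if idle_seconds >= 60:
        return "Are you still there?"
    return None


print(follow_up_prompt("Thanks", idle_seconds=2))
```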

Why Is Prompt Management Important?

Prompt management plays a critical role in the performance and effectiveness of AI models, especially those designed for natural language processing (NLP). Whether in chatbots, virtual assistants, or complex content generation systems, the way prompts (input queries or instructions) are managed can have a profound impact on the accuracy, efficiency, and overall quality of AI-generated outputs. It also becomes both harder and more essential when scaling to large models that handle vast amounts of data and complex tasks.

Let’s explore why prompt management is so crucial by focusing on some key areas:

Ensuring Accuracy and Efficiency in Responses

Proper prompt management helps ensure that input queries are well-formed, relevant, and direct, reducing the chances of misinterpretation by the AI. This is particularly important for tasks that require precise outputs, such as legal document generation, medical diagnostics, or financial analysis. Moreover, efficient prompt management reduces the effort required from users and the processing overhead on systems. When users are guided to formulate clearer, more concise prompts, the AI can respond faster and with greater accuracy, enhancing the overall interaction experience. This efficiency becomes even more vital in real-time applications, where lag or inaccurate responses can lead to frustration or operational inefficiencies.

Preventing Ambiguity in Input Prompts

Ambiguity is a common challenge in human language, and it can be especially problematic in AI interactions. Poorly managed prompts often contain ambiguous phrasing or unclear instructions, which can lead the AI to generate incorrect or irrelevant responses.

Prompt management aims to minimize such ambiguities by guiding users toward more specific, well-defined inputs. This can be achieved through prompt validation mechanisms, suggesting refinements in real-time, or even providing examples of optimal queries.
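
A prompt validation mechanism can start out very simply. The sketch below flags prompts that are very short or that contain vague filler words. The word list, the `min_words` default, and the function name `validate_prompt` are assumptions chosen for illustration; a production validator would likely use richer checks.

```python
import re
from typing import List

# Illustrative list of vague terms; a real system would tune this.
VAGUE_TERMS = {"something", "stuff", "things", "etc", "whatever"}


def validate_prompt(prompt: str, min_words: int = 4) -> List[str]:
    """Return human-readable issues; an empty list means none were found."""
    issues = []
    words = re.findall(r"[a-z']+", prompt.lower())
    if len(words) < min_words:
        issues.append(f"Prompt is very short ({len(words)} words); add more detail.")
    vague = sorted(VAGUE_TERMS.intersection(words))
    if vague:
        issues.append("Vague terms found: " + ", ".join(vague))
    return issues


for issue in validate_prompt("Write stuff"):
    print(issue)
```

The returned messages could be shown to the user in real time as suggested refinements.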

Enhancing the Quality of AI-Generated Outputs

The quality of AI-generated content is directly linked to the clarity and precision of the input prompt. A well-managed prompt serves as a clear roadmap for the AI, ensuring that it understands the context, tone, and desired outcome of the task at hand. For example, when generating creative content, such as articles or marketing copy, the prompt needs to convey the appropriate level of detail, target audience, and stylistic preferences.

Effective prompt management can significantly enhance the richness, creativity, and relevance of AI outputs. Tools that assist in prompt crafting—like pre-built templates, customization options, and keyword guidance—can help users fine-tune their input, leading to higher-quality results.

Scaling Prompt Usage for Large Models

As AI models grow in size and complexity, the need for robust prompt management becomes even more critical. Large models are capable of handling intricate tasks and generating highly nuanced outputs. However, with this increased capability comes a greater need for managing how prompts are structured and utilized.

Scaling prompt usage involves creating systems that can handle a high volume of diverse prompts while maintaining response quality and consistency. This requires implementing automated tools for prompt refinement, validation, and optimization. Effective prompt management in these contexts ensures that the AI can quickly and accurately interpret each query, delivering reliable results without overwhelming the system.

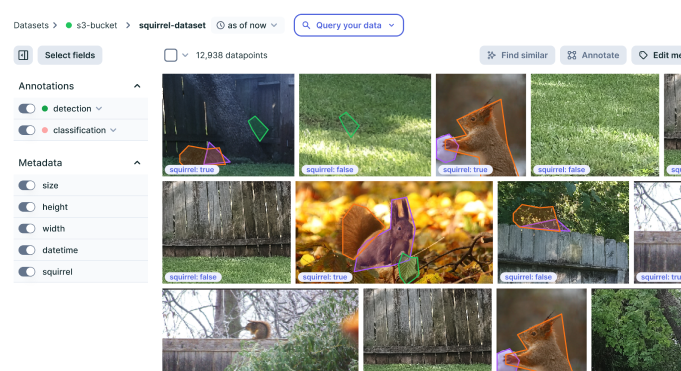

Key Components of Prompt Management

Prompt management is a critical aspect of building efficient AI systems, especially those involving natural language models. Its key components are outlined below:

Prompt Design and Optimization

Prompt design is the foundation of any successful AI interaction. Crafting prompts involves determining the right balance between clarity, specificity, and flexibility. A well-designed prompt guides the AI toward desired outcomes by specifying intent without restricting creativity or exploration. Prompt optimization focuses on refining these inputs based on user feedback, system performance, and ongoing experimentation. This process often involves A/B testing, adjusting variables such as tone, context, and length to create prompts that are precise, effective, and adaptable across various scenarios. In essence, prompt design and optimization ensure that the prompts not only generate accurate results but also align with user expectations and application goals.
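
The A/B testing step mentioned above can be sketched as follows. This is a minimal example under stated assumptions: the two variant texts, the hash-based `assign_variant` function, and the `ABResults` class are all hypothetical, and what counts as a "success" is left to the application.

```python
import hashlib
from collections import defaultdict

# Two hypothetical prompt variants that differ in framing.
VARIANTS = {
    "A": "Summarize the following text in one sentence: {text}",
    "B": "You are an editor. Give a one-sentence summary of: {text}",
}


def assign_variant(user_id: str) -> str:
    """Deterministically map a user to a variant so they always get the same one."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return "A" if digest[0] % 2 == 0 else "B"


class ABResults:
    """Counts trials and successes per variant."""

    def __init__(self) -> None:
        self.trials = defaultdict(int)
        self.successes = defaultdict(int)

    def record(self, variant: str, success: bool) -> None:
        self.trials[variant] += 1
        if success:
            self.successes[variant] += 1

    def success_rate(self, variant: str) -> float:
        trials = self.trials[variant]
        return self.successes[variant] / trials if trials else 0.0


results = ABResults()
variant = assign_variant("user-42")
prompt = VARIANTS[variant].format(text="Prompt management matters.")
results.record(variant, success=True)  # success would come from user feedback
print(variant, results.success_rate(variant))
```

Hashing the user ID rather than picking at random keeps each user's experience consistent across sessions. With small samples, differences in success rate should be read with caution.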

Prompt Storage and Retrieval Systems

Efficient prompt management requires robust systems for storing and retrieving prompts. A centralized prompt storage system allows easy access to predefined prompts, ensuring consistency across multiple use cases or applications. These systems often utilize databases or version-controlled repositories to manage prompt history, categories, metadata, and even different prompt variations. These systems play a crucial role in managing complex applications, ensuring that prompts are scalable, reusable, and easily accessible when needed.
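
To make the idea of versioned prompt storage concrete, here is a minimal in-memory sketch. The `PromptStore` and `PromptVersion` names are hypothetical, and a real system would more likely persist this data in a database or a version-controlled repository, as described above.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class PromptVersion:
    text: str
    metadata: Dict[str, str] = field(default_factory=dict)


class PromptStore:
    """In-memory store that keeps every saved version of each named prompt."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[PromptVersion]] = {}

    def save(self, name: str, text: str, **metadata: str) -> int:
        """Append a new version and return its 1-based version number."""
        versions = self._versions.setdefault(name, [])
        versions.append(PromptVersion(text, dict(metadata)))
        return len(versions)

    def get(self, name: str, version: Optional[int] = None) -> PromptVersion:
        """Return the requested version, or the latest if version is None."""
        versions = self._versions[name]  # raises KeyError for unknown names
        if version is None:
            return versions[-1]
        if not 1 <= version <= len(versions):
            raise IndexError(f"{name} has no version {version}")
        return versions[version - 1]


store = PromptStore()
store.save("greeting", "Hello!", category="chatbot")
store.save("greeting", "Hi, how can I help you today?", category="chatbot")
print(store.get("greeting").text)
print(store.get("greeting", version=1).text)
```

Keeping old versions retrievable makes it possible to roll back a prompt change that turns out to hurt response quality.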

Monitoring and Evaluation of Prompt Effectiveness

Monitoring and evaluating the effectiveness of prompts is an ongoing process in prompt management. Once a prompt is used, its performance must be tracked to assess its impact on user experience and system outcomes. Key metrics include user engagement, response accuracy, and error rates. This data helps identify patterns in prompt performance, allowing developers to refine prompts and improve system behavior over time. Evaluation methods can range from qualitative feedback to quantitative analysis, such as tracking click-through rates or measuring task completion. By closely monitoring the outcomes of each prompt, organizations can ensure their AI systems remain effective, adaptive, and aligned with user needs.
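
Two of the metrics named above, response accuracy and error rate, can be tracked per prompt with a small helper. This is a sketch only: the `PromptMonitor` class and the `prompt_id` naming are assumptions, and how a response is judged "correct" depends on the application.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PromptStats:
    calls: int = 0
    correct: int = 0
    errors: int = 0

    @property
    def accuracy(self) -> float:
        return self.correct / self.calls if self.calls else 0.0

    @property
    def error_rate(self) -> float:
        return self.errors / self.calls if self.calls else 0.0


class PromptMonitor:
    """Accumulates outcome counts for each prompt ID."""

    def __init__(self) -> None:
        self.stats = defaultdict(PromptStats)

    def log(self, prompt_id: str, correct: bool, error: bool = False) -> None:
        entry = self.stats[prompt_id]
        entry.calls += 1
        entry.correct += int(correct)
        entry.errors += int(error)


monitor = PromptMonitor()
monitor.log("faq_v1", correct=True)
monitor.log("faq_v1", correct=False)
monitor.log("faq_v1", correct=False, error=True)
monitor.log("faq_v1", correct=True)
print(monitor.stats["faq_v1"].accuracy, monitor.stats["faq_v1"].error_rate)
```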

Handling Prompt Context and Memory

Context and memory are crucial components of prompt management, particularly for dynamic, multi-turn interactions. Handling context means that prompts must be designed to maintain coherence across multiple exchanges with the user. The system should be able to recall previous interactions and relevant information to keep conversations fluid and relevant. Memory systems allow AI to retain information from previous prompts, making it easier to manage long conversations or complex queries that require continuity. By effectively managing context and memory, prompt systems can deliver more personalized and meaningful responses, ensuring that interactions feel natural and tailored to individual users.
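
One simple way to carry context across turns is a sliding window over recent exchanges. The sketch below assumes a plain-text `User:` / `Assistant:` transcript format and a hypothetical `ConversationMemory` class; real systems may use structured message lists, summaries, or retrieval instead.

```python
from collections import deque


class ConversationMemory:
    """Keeps the most recent turns and prepends them to each new prompt."""

    def __init__(self, max_turns: int = 3) -> None:
        # With maxlen set, the oldest turn is dropped automatically when full.
        self.turns = deque(maxlen=max_turns)

    def add_turn(self, user: str, assistant: str) -> None:
        self.turns.append((user, assistant))

    def build_prompt(self, new_message: str) -> str:
        lines = []
        for user, assistant in self.turns:
            lines.append(f"User: {user}")
            lines.append(f"Assistant: {assistant}")
        lines.append(f"User: {new_message}")
        lines.append("Assistant:")
        return "\n".join(lines)


memory = ConversationMemory(max_turns=2)
memory.add_turn("What is the capital of India?", "New Delhi.")
memory.add_turn("Write a haiku about it.", "Wide roads, old gates, dusk.")
print(memory.build_prompt("Now translate that haiku into French."))
```

Capping the window keeps the prompt length bounded, at the cost of forgetting older turns.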

Best Practices for Effective Prompt Management

Effective prompt management is essential for generating accurate and relevant responses from AI models.

Developing Clear and Precise Prompts

A well-structured prompt should eliminate ambiguity, guiding the AI to deliver the desired result. This involves using concise language and focusing on specific goals, which reduces the risk of off-topic or misleading outputs.

Leveraging Contextual Prompts for Better Results

Leveraging contextual prompts can further enhance effectiveness, as these prompts incorporate prior interactions, ensuring the AI tailors its responses based on ongoing conversations or tasks. The ability to weave relevant context into prompts can dramatically improve the quality of the generated outcomes, as the AI becomes more attuned to the user’s intentions and needs.

Iterative Testing and Refinement of Prompts

Iterative testing and refinement of prompts are crucial for optimizing performance. By testing prompts in various scenarios and adjusting them based on the results, users can identify the most effective structures and patterns. This process also helps in eliminating inefficiencies or biases in the AI’s responses.
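
The test-and-adjust loop can be sketched as a tiny evaluation harness that scores each candidate prompt against a fixed set of test cases. Everything here is hypothetical: `fake_model` is a deliberately simplistic stand-in for a real LLM call, and substring matching is only one of many possible scoring rules.

```python
from typing import Callable, Dict, List, Tuple

Case = Tuple[str, str]  # (input text, substring expected in the output)


def score_prompt(template: str, cases: List[Case], model: Callable[[str], str]) -> float:
    """Return the fraction of cases whose output contains the expected text."""
    passed = 0
    for text, expected in cases:
        output = model(template.format(text=text))
        if expected.lower() in output.lower():
            passed += 1
    return passed / len(cases) if cases else 0.0


def best_prompt(candidates: Dict[str, str], cases: List[Case], model: Callable[[str], str]) -> str:
    """Return the name of the candidate template with the highest score."""
    return max(candidates, key=lambda name: score_prompt(candidates[name], cases, model))


def fake_model(prompt: str) -> str:
    """Stand-in for an LLM: only answers well when asked for a city name."""
    lowered = prompt.lower()
    if "capital of india" in lowered and "city name" in lowered:
        return "New Delhi"
    return "I am not sure what you are asking."


candidates = {
    "v1": "{text}",
    "v2": "Answer with only the city name. {text}",
}
cases = [("What is the capital of India?", "New Delhi")]
print(best_prompt(candidates, cases, fake_model))
```

Rerunning the same cases after every prompt change turns refinement into a repeatable check rather than a one-off judgment.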

Using Automation Tools for Large-Scale Prompt Management

Automation tools such as LangChain, LlamaIndex, and PromptLayer are invaluable for large-scale prompt management. Depending on the tool, they can help track prompt performance, streamline the revision process, and ensure consistency across numerous applications, making it easier to manage a high volume of interactions without compromising quality. By applying these practices, users can maintain control over AI responses, ensuring they align with their objectives.

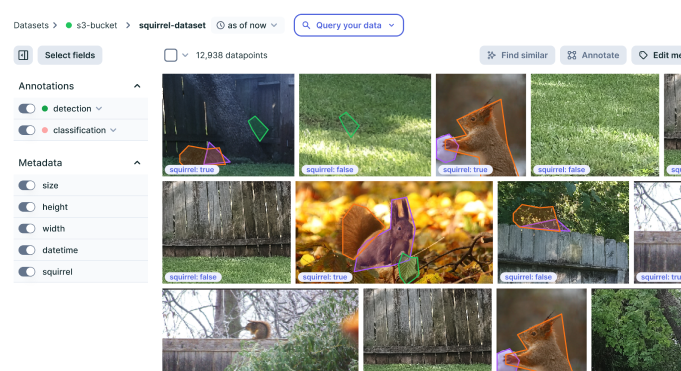
