In machine learning, a baseline model is a simple predictive model that helps to set an initial comparison point for assessing the performance of more complex models. It acts like a foundation or basic gauge so you can see if your advanced models are truly better.

What are Baseline Models?

Baseline models have been around since the early days of statistical analysis and machine learning. Initially, they were used to validate whether new techniques were genuine improvements. As the field progressed, these models became essential for model validation and comparison, ensuring that the complexities of advanced models were actually worthwhile.

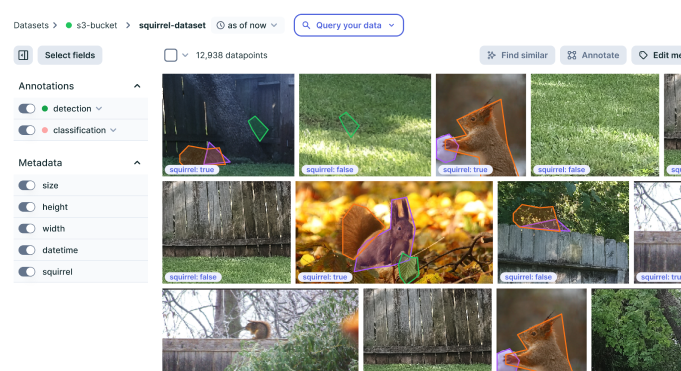

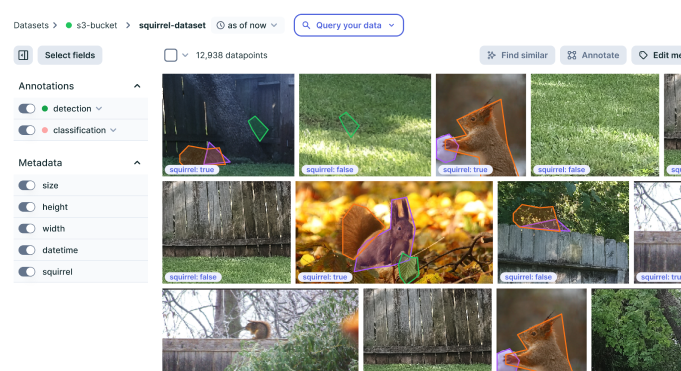


Baseline models are important in predictive analytics and machine learning because they act as a starting point. They offer a simple, sometimes trivial, way to measure how well a target can be predicted from a dataset before more complicated methods are applied. Their main purpose is to provide a reference point for comparison: if a new, more advanced model does not improve substantially on the baseline, its extra complexity and computational cost are hard to justify. A baseline model has three defining characteristics:

- Simplicity: A basic model is usually simple and easy to understand. It uses clear methods that are not hard to set up and do not need a lot of computer power or complex data preparation.

- Performance Metric Establishment: It sets a minimum performance standard. Any model developed afterwards must outperform the baseline to be considered effective.

- Validation: It acts as a sanity check. If a complicated model does worse than, or only as well as, the simple one, this may point to problems such as overfitting, incorrect modeling assumptions, or issues with data quality.

Examples of Simple Baseline Models

- Mean or Median Prediction Model: In regression tasks, using the mean (average) or median of the target variable as a prediction for all instances can give us a simple baseline. For example, when forecasting sales, we might predict that future monthly sales will be equal to the average sales in past months. This gives us an easy starting point to compare more complex models.

- Mode Prediction Model: In classification tasks, to start with a simple baseline, we can predict the class that appears most often (mode) for all cases. For example, if in our dataset 90% of emails are non-spam, then this basic model would assume every email is non-spam.

- Random Model: This model predicts outputs at random while following the distribution of classes found in the dataset. It is useful for showing what chance-level performance looks like, especially in classification problems.

- Historical Average Model: This model is used in time series forecasting to predict future values by looking at past averages. For instance, it might estimate the temperature for the next month by taking the average temperatures from that same month in earlier years.

- Simple Linear Regression: When the data show a roughly linear relationship between variables, simple linear regression can serve as a baseline. It uses a single independent variable to predict the dependent variable (with several independent variables it becomes multiple linear regression) and is often used as a starting point in analysis.
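As an illustration of the last item in the list, the following is a minimal sketch of a one-feature least-squares fit using only the Python standard library. The function names and the advertising-spend data are hypothetical and chosen purely for demonstration.

```python
from statistics import mean


def fit_simple_linear_regression(x, y):
    """Fit y ~ intercept + slope * x by ordinary least squares (one feature)."""
    x_bar, y_bar = mean(x), mean(y)
    # Sum of squared deviations of x; zero means x has no variation.
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x must contain at least two distinct values")
    # Sum of cross-deviations of x and y.
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope


def predict(intercept, slope, x):
    """Apply the fitted line to new inputs."""
    return [intercept + slope * xi for xi in x]


# Hypothetical data: advertising spend vs. sales (exactly y = 1 + 2x).
ad_spend = [1.0, 2.0, 3.0, 4.0, 5.0]
sales = [3.0, 5.0, 7.0, 9.0, 11.0]
intercept, slope = fit_simple_linear_regression(ad_spend, sales)
```

A more complex model would then need to produce lower error than `predict(intercept, slope, ...)` on held-out data to justify itself.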

The Importance of Baseline Models

Let’s have a look at the reasons why baseline models are important in machine learning.

Benchmarking Tool

Baseline models serve as a standard or reference point. They create the basic performance level that any advanced model should exceed, setting clear goals and expectations for improvement. This is especially helpful for planning projects and setting realistic goals to make the model better.

Model Evaluation

Baseline models give an easy way to check whether new models work well. If a new, complicated model does not perform better than a simple baseline, it could mean that the model is not suitable for the data or that the approach needs to be revisited.

Comparison with Advanced Models

When comparing advanced models to the baseline, developers show with numbers how much better complex algorithms are. This comparison is very important because it helps explain why using more complicated and demanding models is worth it. These advanced models need a lot of computer power and take more time to create and train. By demonstrating their value clearly, developers can prove that these extra efforts bring real benefits.
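One way to put a number on this comparison is the relative reduction in error over the baseline. The sketch below assumes an error metric where lower is better; the function names and the 5% threshold `min_gain` are hypothetical and should be set per project.

```python
def relative_improvement(baseline_error, model_error):
    """Fraction by which the model reduces the baseline's error (lower error is better)."""
    if baseline_error <= 0:
        raise ValueError("baseline_error must be positive")
    return (baseline_error - model_error) / baseline_error


def is_worth_the_complexity(baseline_error, model_error, min_gain=0.05):
    """Return True if the model beats the baseline by at least min_gain (an assumed threshold)."""
    return relative_improvement(baseline_error, model_error) >= min_gain
```

For example, a model with error 8.0 against a baseline error of 10.0 yields a relative improvement of 0.2, while an error of 9.8 yields only 0.02 and would not clear the assumed threshold.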

Understanding Data Patterns

Baseline models are useful for understanding basic data patterns without the extra noise from more advanced models. They usually use fewer variables or simpler relationships, which can make visible the main trends in the data that might be hidden by complicated models.

Risk Mitigation

Using a baseline model can help lower risks. It means there is always a simple, working solution ready to use. This helps a lot in projects with tight deadlines or in situations where the complicated models do not work as well as expected. Having this baseline ensures that even if advanced plans fail, there’s at least something available and functional to rely on for the task at hand.

Preventing Overfitting and Overcomplexity

Baseline models help prevent overfitting and overcomplexity in several ways:

- Simplicity for safety: They show how effective simplicity can be. Simpler models often generalize better and are more reliable across different situations.

- Performance comparison: They give a clear way to see whether making a model more complicated is justified by a substantial gain in performance.

- Focus on data quality: They encourage giving more attention to data quality and feature engineering instead of relying on very complicated models. This can help improve overall performance.

Types of Baseline Models

Random Baseline Models

Random baseline models make predictions by sampling randomly from the distribution of the output variable. In classification tasks, this could mean choosing a class at random according to how often each class appears in the training data. This kind of model establishes chance-level performance, a floor that any other model needs to beat.
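A minimal sketch of such a class-frequency-weighted random baseline is shown below, using only the standard library. The function names, the `seed` parameter, and the spam/ham labels are hypothetical. The expected accuracy formula assumes that the evaluation data has the same class proportions as the training data.

```python
import random
from collections import Counter


def fit_class_distribution(train_labels):
    """Return the sorted class names and how often each occurs in training."""
    counts = Counter(train_labels)
    classes = sorted(counts)
    weights = [counts[c] for c in classes]
    return classes, weights


def predict_random(classes, weights, n, seed=0):
    """Draw n predictions at random, weighted by training class frequency."""
    rng = random.Random(seed)
    return rng.choices(classes, weights=weights, k=n)


def expected_accuracy(weights):
    """Chance-level accuracy of weighted guessing: sum of squared class proportions."""
    total = sum(weights)
    return sum((w / total) ** 2 for w in weights)


# Hypothetical training labels: 90% ham, 10% spam.
train_labels = ["ham"] * 9 + ["spam"] * 1
classes, weights = fit_class_distribution(train_labels)
predictions = predict_random(classes, weights, n=20)
chance_level = expected_accuracy(weights)  # 0.9**2 + 0.1**2
```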

Majority Class Baseline Models

These models always predict the class that appears most often in the training data. For a binary classification task, if 70% of examples are positive, the majority class baseline will predict “positive” for every single input and reach about 70% accuracy on data with the same class balance. This model helps to expose class imbalance in a dataset and gives a minimum standard, usually measured by accuracy and F1-score, that other models should exceed.
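The 70% example can be sketched as follows. The helper names are hypothetical, and for brevity the baseline is scored on the same labels it was fitted on; in practice a held-out set would be used.

```python
from collections import Counter


def majority_class(train_labels):
    """Return the most frequent label in the training data."""
    return Counter(train_labels).most_common(1)[0][0]


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def f1_score(y_true, y_pred, positive):
    """F1 for one class, treated as the positive class."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    if tp == 0:
        return 0.0  # no true positives: F1 is defined as 0 here
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical dataset: 70% positive, 30% negative.
labels = ["positive"] * 7 + ["negative"] * 3
majority = majority_class(labels)
preds = [majority] * len(labels)

acc = accuracy(labels, preds)                        # 7 of 10 correct
f1_pos = f1_score(labels, preds, "positive")         # precision 0.7, recall 1.0
f1_neg = f1_score(labels, preds, "negative")         # never predicted, so 0.0
```

The zero F1-score for the minority class shows why accuracy alone can flatter this baseline on imbalanced data.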

Simple Heuristic Baseline Models

Heuristic models apply straightforward rules or methods that rely on domain-specific knowledge or clear patterns noticed in the data. For instance, a heuristic model might conclude that any email containing the word “free” is likely to be spam. These models are usually quick to set up and can offer unexpectedly strong baselines when the heuristics are picked wisely.
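The “free” rule mentioned above could look like the sketch below. The function name is hypothetical, and matching the keyword as a whole word, case-insensitively, is an assumption made here so that words such as “freedom” are not flagged.

```python
import re


def is_spam_heuristic(email_text, keyword="free"):
    """Flag an email as spam if it contains the keyword as a whole word."""
    pattern = r"\b" + re.escape(keyword) + r"\b"
    return re.search(pattern, email_text, flags=re.IGNORECASE) is not None
```

Calling `is_spam_heuristic("Claim your FREE prize")` flags the message, while `is_spam_heuristic("Meeting at noon")` does not.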

Naive Baseline Models

Naive models rely on assumptions that are usually seen as too simple, but they are easy to use. For example, a naive forecast model for stock prices might always predict that tomorrow’s price will be the same as today’s. This type may overlap with heuristic models, but it is usually simpler and based on less reasoning.

Time Series Baseline Models (Last Observation Carried Forward)

In time series forecasting, a common baseline is the last observation carried forward (LOCF), also known as the naive or persistence forecast. With this method, predictions for all future values are equal to the latest observed value. This model helps show how much extra benefit might come from using more advanced techniques in analyzing time series data.
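A minimal sketch of this forecast, scored with mean absolute error, is given below. The function names and the price series are hypothetical.

```python
def locf_forecast(history, horizon):
    """Repeat the last observed value for every future step."""
    if not history:
        raise ValueError("history must contain at least one observation")
    return [history[-1]] * horizon


def mean_absolute_error(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical series: four observed values, then three values to forecast.
history = [100.0, 102.0, 101.0, 105.0]
actual = [106.0, 104.0, 107.0]

forecast = locf_forecast(history, horizon=len(actual))  # [105.0, 105.0, 105.0]
mae = mean_absolute_error(actual, forecast)             # (1 + 1 + 2) / 3
```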

Classification Baseline Models (Most Frequent Class)

This is the majority class baseline described above, applied to any classification task: the model predicts the most frequent class for every instance. It is a fast way to set a basic level of performance in classification tasks and is especially helpful when dealing with datasets where classes are very imbalanced.

Regression Baseline Models (Mean Value)

In regression tasks, using the mean (average) of the training set’s target variable as the prediction for all examples is a good starting point. It creates a basic benchmark against which other models can be measured with metrics such as mean squared error or mean absolute error.
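The sketch below scores a constant-prediction baseline with both metrics. It also includes the median as an alternative constant, since the median is less sensitive to outliers. The function names and the data, which deliberately contain one outlier, are hypothetical.

```python
from statistics import mean, median


def mean_squared_error(y_true, y_pred):
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mean_absolute_error(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def constant_baseline_scores(train_y, test_y, strategy=mean):
    """Predict one constant (mean or median of train_y) for every test example."""
    constant = strategy(train_y)
    preds = [constant] * len(test_y)
    return {
        "prediction": constant,
        "mse": mean_squared_error(test_y, preds),
        "mae": mean_absolute_error(test_y, preds),
    }


# Hypothetical targets; 54.0 is an outlier in the training set.
train_y = [10.0, 12.0, 11.0, 13.0, 54.0]
test_y = [11.0, 12.0, 14.0]

mean_scores = constant_baseline_scores(train_y, test_y, strategy=mean)
median_scores = constant_baseline_scores(train_y, test_y, strategy=median)
```

Here the mean baseline predicts 20.0 and the median baseline predicts 12.0, so the single outlier noticeably raises the error of the mean baseline on this test set.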

Applications of Baseline Models

Time Series Forecasting

Baseline models like the last observation carried forward or historical averages are used a lot in time series analysis. They help to reveal trends and seasonality in data and give an easy point of comparison for more complicated models such as ARIMA (AutoRegressive Integrated Moving Average) or LSTM (Long Short-Term Memory) networks.
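A historical-average baseline that respects seasonality can be sketched by averaging past values per calendar month. The function names and the temperature readings below are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def fit_monthly_averages(observations):
    """observations: iterable of (month, value) pairs, with month in 1..12."""
    by_month = defaultdict(list)
    for month, value in observations:
        by_month[month].append(value)
    return {month: mean(values) for month, values in by_month.items()}


def predict_month(monthly_avg, month):
    """Forecast a month as the average of the same month in earlier years."""
    return monthly_avg[month]


# Hypothetical temperatures for January (1) and July (7) over three years.
observations = [(1, 2.0), (7, 24.0), (1, 4.0), (7, 26.0), (1, 3.0), (7, 25.0)]
monthly_avg = fit_monthly_averages(observations)
january_forecast = predict_month(monthly_avg, 1)
july_forecast = predict_month(monthly_avg, 7)
```

An ARIMA or LSTM model would be expected to beat these per-month averages on held-out periods before its extra complexity is accepted.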

Business and Financial Applications

In the business and finance fields, baseline models are useful for risk evaluation, sales prediction, and financial strategy. For example, using past sales figures to predict future sales can act as a starting point for judging more complex forecasting models that might include economic indicators or consumer sentiment analysis.

Healthcare and Medical Research

Baseline models in healthcare help predict things like patient outcomes, treatment success rates, or how diseases spread by using simple past data. They are crucial for first evaluations before using more detailed models that may include genetic information, biometric details, or other complex sets of data.

Environmental and Climate Studies

In environmental and climate sciences, baseline models help predict weather trends, effects of climate change, or how quickly resources might run out, using historical data. These models are very important because they set a standard that can be used to see whether new environmental policies or changes are making a difference.
