
| comments | description | keywords |
|---|---|---|
| true | Learn about the ImageNette dataset and its usage in deep learning model training. Find code snippets for model training and explore ImageNette datatypes. | ImageNette dataset, Ultralytics, YOLO, Image classification, Machine Learning, Deep learning, Training code snippets, CNN, ImageNette160, ImageNette320 |

The ImageNette dataset is a subset of the larger ImageNet dataset that includes only 10 easily distinguishable classes. It was created to provide a quicker, easier-to-use version of ImageNet for software development and education.

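The ImageNette dataset is split into two subsets: a training set, used to train models, and a validation set, used to evaluate and benchmark the trained models.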
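To check how the splits are populated on disk, you can count the images per class in each split directory. The following is a minimal sketch. It assumes the dataset has been downloaded and unpacked in a folder-per-class layout (`<root>/<split>/<class_name>/<image>`) with one folder per split, such as `train` and `val`. The function name `count_images` and the set of accepted file extensions are illustrative assumptions, not part of the Ultralytics API.

```python
from collections import Counter
from pathlib import Path

# Assumption: images are stored with one of these extensions (compared case-insensitively).
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def count_images(root: str) -> dict[str, Counter]:
    """Count images per class for every split folder directly under `root`.

    Assumes a folder-per-class layout: root/<split>/<class_name>/<image>.
    Returns a mapping of split name -> Counter(class_name -> image count).
    """
    counts: dict[str, Counter] = {}
    for split_dir in sorted(p for p in Path(root).iterdir() if p.is_dir()):
        per_class = Counter()
        for class_dir in sorted(p for p in split_dir.iterdir() if p.is_dir()):
            # Count only regular files whose extension looks like an image.
            per_class[class_dir.name] = sum(
                1
                for f in class_dir.iterdir()
                if f.is_file() and f.suffix.lower() in IMAGE_SUFFIXES
            )
        counts[split_dir.name] = per_class
    return counts
```

For example, `count_images('path/to/imagenette')['train']` returns a `Counter` that maps each class folder name to its number of images. The path is a placeholder for wherever your copy of the dataset is stored.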

The ImageNette dataset is widely used to train and evaluate image classification models, such as Convolutional Neural Networks (CNNs), as well as other machine learning algorithms. Its straightforward format and well-chosen classes make it a handy resource for both beginners and experienced practitioners in machine learning and computer vision.

To train a model on the ImageNette dataset for 100 epochs with a standard image size of 224x224, you can use the following code snippets. For a comprehensive list of available arguments, refer to the model Training page.

!!! Example "Train Example"

    === "Python"

        ```python
        from ultralytics import YOLO

        # Load a model
        model = YOLO('yolov8n-cls.pt')  # load a pretrained model (recommended for training)

        # Train the model
        results = model.train(data='imagenette', epochs=100, imgsz=224)
        ```

    === "CLI"

        ```bash
        # Start training from a pretrained *.pt model
        yolo classify train data=imagenette model=yolov8n-cls.pt epochs=100 imgsz=224
        ```
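The Python and CLI tabs describe the same training run. In the CLI, each keyword argument to `model.train()`, plus the model path, is written as a `key=value` pair after `yolo <task> <mode>`. For `-cls` classification models, the task is `classify`. The helper below, `to_cli`, is hypothetical and only illustrates this correspondence. It is not an Ultralytics function.

```python
def to_cli(task: str, mode: str, **kwargs) -> str:
    """Build a `yolo` CLI command string from keyword arguments.

    Hypothetical helper: each keyword argument becomes a `key=value` pair,
    appended in the order the arguments were passed.
    """
    pairs = [f"{key}={value}" for key, value in kwargs.items()]
    return " ".join(["yolo", task, mode, *pairs])
```

For example, `to_cli('classify', 'train', data='imagenette', model='yolov8n-cls.pt', epochs=100, imgsz=224)` returns `yolo classify train data=imagenette model=yolov8n-cls.pt epochs=100 imgsz=224`.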

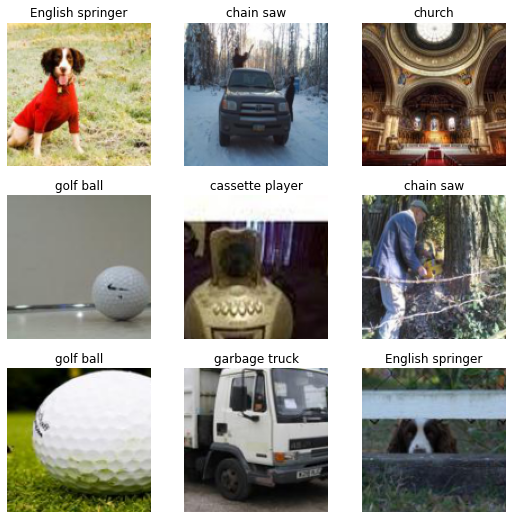

The ImageNette dataset contains colored images of various objects and scenes, providing a diverse dataset for image classification tasks. Here are some examples of images from the dataset:

The example showcases the variety and complexity of the images in the ImageNette dataset, highlighting the importance of a diverse dataset for training robust image classification models.

For faster prototyping and training, the ImageNette dataset is also available in two reduced sizes: ImageNette160 and ImageNette320. These versions keep the same classes and structure as the full ImageNette dataset, but each image is resized so that its shorter side is 160 or 320 pixels, respectively, with the aspect ratio preserved. This makes them particularly useful for preliminary model testing or when computational resources are limited.
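The reduced ImageNette versions are produced by scaling each image so that its shorter side is 160 or 320 pixels while keeping the aspect ratio. The following sketch computes the resulting dimensions. The name `resize_shortest_side` is illustrative. Rounding the longer side to the nearest pixel is an assumption, so results may differ by a pixel from the tool that produced the published files.

```python
def resize_shortest_side(width: int, height: int, target: int) -> tuple[int, int]:
    """Return (width, height) after scaling so the shorter side equals `target`.

    The aspect ratio is preserved; the longer side is rounded to the nearest
    pixel (an assumed rounding rule).
    """
    if width <= height:
        # Width is the shorter (or equal) side: pin it to the target.
        return target, round(height * target / width)
    # Height is the shorter side: pin it to the target.
    return round(width * target / height), target
```

For a hypothetical 500x375 photo, `resize_shortest_side(500, 375, 160)` returns `(213, 160)` and `resize_shortest_side(500, 375, 320)` returns `(427, 320)`.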

To use these datasets, replace `imagenette` with `imagenette160` or `imagenette320` in the training command and set `imgsz` to the matching size (160 or 320). The following code snippets illustrate this:
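If you switch between the three variants often, it can help to keep each dataset name paired with its image size. The following sketch is a hypothetical helper that returns the keyword arguments used in the examples on this page, which you can then unpack into `model.train()`. The names `train_kwargs` and `IMGSZ_BY_VARIANT` are illustrative and not part of Ultralytics.

```python
# Image size paired with each ImageNette variant in the examples on this page.
IMGSZ_BY_VARIANT = {
    "imagenette": 224,
    "imagenette160": 160,
    "imagenette320": 320,
}


def train_kwargs(variant: str, epochs: int = 100) -> dict:
    """Return keyword arguments for `model.train(...)` for an ImageNette variant.

    Hypothetical helper; raises ValueError for an unknown variant name.
    """
    if variant not in IMGSZ_BY_VARIANT:
        raise ValueError(
            f"unknown variant {variant!r}; expected one of {sorted(IMGSZ_BY_VARIANT)}"
        )
    return {"data": variant, "epochs": epochs, "imgsz": IMGSZ_BY_VARIANT[variant]}
```

For example, `model.train(**train_kwargs('imagenette160'))` is equivalent to `model.train(data='imagenette160', epochs=100, imgsz=160)`, where `model` is the `YOLO('yolov8n-cls.pt')` instance from the examples.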

!!! Example "Train Example with ImageNette160"

    === "Python"

        ```python
        from ultralytics import YOLO

        # Load a model
        model = YOLO('yolov8n-cls.pt')  # load a pretrained model (recommended for training)

        # Train the model with ImageNette160
        results = model.train(data='imagenette160', epochs=100, imgsz=160)
        ```

    === "CLI"

        ```bash
        # Start training from a pretrained *.pt model with ImageNette160
        yolo classify train data=imagenette160 model=yolov8n-cls.pt epochs=100 imgsz=160
        ```

!!! Example "Train Example with ImageNette320"

    === "Python"

        ```python
        from ultralytics import YOLO

        # Load a model
        model = YOLO('yolov8n-cls.pt')  # load a pretrained model (recommended for training)

        # Train the model with ImageNette320
        results = model.train(data='imagenette320', epochs=100, imgsz=320)
        ```

    === "CLI"

        ```bash
        # Start training from a pretrained *.pt model with ImageNette320
        yolo classify train data=imagenette320 model=yolov8n-cls.pt epochs=100 imgsz=320
        ```

These smaller versions of the dataset allow rapid iteration during development while still providing realistic image classification tasks.

If you use the ImageNette dataset in your research or development work, please acknowledge it appropriately. For more information about the ImageNette dataset, visit the ImageNette dataset GitHub page.
