Large Language Models (LLMs) have changed the field of artificial intelligence by enabling machines to process and produce human-like text. Models such as GPT-4, Gemini, and Claude can handle many language tasks, from answering questions to drafting content. One adjustable setting that shapes their behavior is temperature. This article explains what temperature means for LLMs, how it affects their output, and how to choose a value for different purposes.

What is LLM Temperature?

In the context of LLMs, temperature is a parameter that controls the randomness of the model’s output. It directly affects how the model picks among candidate next words (tokens) when generating text. A low temperature makes output more predictable and focused, whereas a higher temperature makes answers more varied and less predictable.

The Concept of Temperature in LLMs

The temperature setting functions as a dial for balancing precision and variety. Mathematically, the model’s raw score (logit) for each candidate word is divided by the temperature before the scores are converted into probabilities with a softmax. Low temperatures sharpen the distribution around the top predictions, while higher temperatures flatten it, increasing the chance of picking less likely words.
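The scaling described above can be sketched in a few lines of Python. This is a minimal illustration, not the code of any particular model: the function name `softmax_with_temperature` and the three logit values are assumptions chosen for the example.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Turn raw scores (logits) into probabilities after dividing by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max so exp() cannot overflow
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits for three candidate next words
logits = [2.0, 1.0, 0.1]

low = softmax_with_temperature(logits, 0.2)   # sharp: top word dominates
high = softmax_with_temperature(logits, 1.5)  # flat: other words gain probability

print([round(p, 3) for p in low])
print([round(p, 3) for p in high])
```

At the low setting almost all probability mass sits on the first word, while at the high setting the second and third words become realistic choices.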

When you set the temperature to a low value (near zero), the model becomes cautious: it almost always chooses the next word that is most probable given its training. Raising the temperature encourages more adventurous behavior, sometimes leading to diverse and unpredictable outputs. This trade-off between coherence and novelty is central to tuning how LLMs perform in different tasks.
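To make the contrast concrete, here is a small sketch of next-word selection. It assumes a common convention in which a temperature of exactly 0 means greedy decoding (always take the top word); the word list, logits, and the function name `sample_next_word` are hypothetical.

```python
import math
import random

def sample_next_word(words, logits, temperature, rng):
    """Pick one word: greedy at temperature 0, weighted random sampling otherwise."""
    if temperature == 0:
        # Greedy decoding (assumed convention): take the highest-scoring word
        best = max(range(len(logits)), key=lambda i: logits[i])
        return words[best]
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    weights = [math.exp(s - peak) for s in scaled]  # unnormalized softmax weights
    return rng.choices(words, weights=weights, k=1)[0]

# Hypothetical candidates and scores
words = ["energy", "power", "sunshine"]
logits = [2.0, 1.0, 0.1]
rng = random.Random(0)  # fixed seed so the run is repeatable

greedy = [sample_next_word(words, logits, 0, rng) for _ in range(5)]
varied = [sample_next_word(words, logits, 1.5, rng) for _ in range(200)]

print(greedy)
print({w: varied.count(w) for w in words})
```

The greedy list repeats the same word every time, while the higher-temperature run spreads its picks across the candidates.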

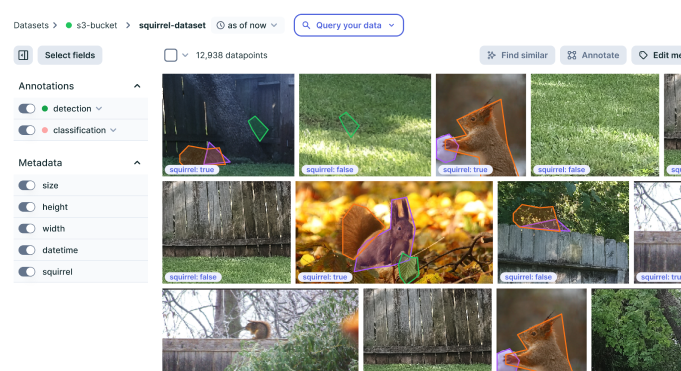

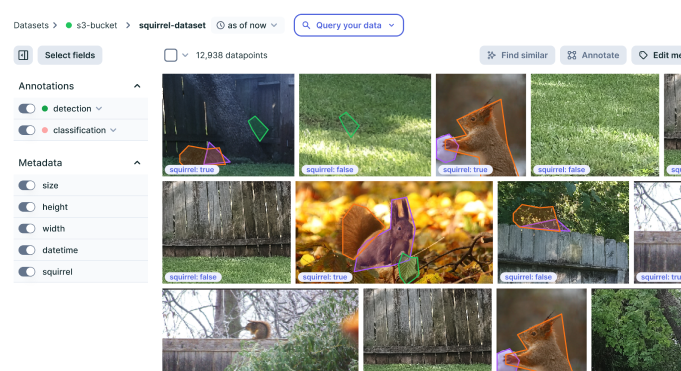

Practical Implications of Temperature Settings

The temperature setting affects how the LLM responds. For use cases that need precise and correct information, such as technical or legal writing, a lower temperature is better. It keeps the output consistent and reduces the chance of erratic or unrelated details, although it does not by itself guarantee factual accuracy.

On the other hand, tasks that benefit from creativity and diverse responses, such as brainstorming or creative writing, may call for a higher temperature. It pushes the model to explore more possibilities, resulting in outputs that are more innovative and varied.

Adjusting temperature is an important part of customizing LLM behavior for different scenarios. For example, a chatbot built for casual conversation could use a moderate temperature to balance engagement and coherence. A content generator that produces marketing copy, by contrast, might use higher values to come up with more diverse and captivating suggestions.

Best Practices for Choosing Temperature Settings

The right temperature depends on the task and the kind of output you need. The following ranges are a reasonable starting point:

- Low Temperature (0.1 – 0.4): This range is suitable for tasks that need a high level of accuracy and consistency. Examples include technical writing, formal communications, and data generation where precision plays an important role.

- Moderate Temperature (0.5 – 0.7): This level is good for keeping a balance between coherence and creativity. It fits well with chatbots, customer service apps, and general content creation that requires both reliability and variety in responses.

- High Temperature (0.8 – 1.0+): This range suits creative tasks where varied and unconventional outputs are an advantage, such as artistic content, brainstorming, or storytelling.
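The guidelines above can be captured as a small lookup. This is only a sketch: the task names, the `suggest_temperature` helper, and the choice of the midpoint of each range as the starting value are assumptions made for illustration, and the high band is capped at 1.0 here for simplicity.

```python
# Ranges taken from the guidelines above (high band capped at 1.0 for this sketch)
TEMPERATURE_RANGES = {
    "low": (0.1, 0.4),
    "moderate": (0.5, 0.7),
    "high": (0.8, 1.0),
}

# Hypothetical task labels mapped to a band
TASK_TO_BAND = {
    "technical_writing": "low",
    "formal_communication": "low",
    "chatbot": "moderate",
    "customer_service": "moderate",
    "brainstorming": "high",
    "storytelling": "high",
}

def suggest_temperature(task):
    """Return the midpoint of the band for a task; unknown tasks fall back to moderate."""
    band = TASK_TO_BAND.get(task, "moderate")
    lower, upper = TEMPERATURE_RANGES[band]
    return round((lower + upper) / 2, 2)

for task in ("technical_writing", "chatbot", "storytelling"):
    print(task, suggest_temperature(task))
```

A midpoint is just a starting value; in practice you would try a few settings within the band and compare the outputs.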

Examples of Temperature in Action

To illustrate the effects of different temperature settings, let’s consider two examples:

Example 1: Low Temperature Setting (0.2)

Prompt: “Explain the importance of renewable energy.”

Response: “Renewable energy is very important to lower greenhouse gas release, cut down pollution, and keep up with durability. It works against climate change and assures a cleaner world for our kids.”

Example 2: High Temperature Setting (0.9)

Prompt: “Explain the importance of renewable energy.”

Response: “Renewable energy is a gift from the sun and wind, they are like endless sources of power that promise us a cleaner place in the future. It’s not only about lessening emissions but also about accepting an equilibrium with our world where both creation and nature live together.”

The difference between the two is easy to see: the first response is direct and factual, while the second is more figurative and creative.
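One way to put a number on this kind of variability is the entropy of the next-word distribution at each setting. The sketch below uses the two temperatures from the examples (0.2 and 0.9); the logits are hypothetical and the helper names are assumptions, so the exact values mean little beyond the comparison.

```python
import math

def token_probabilities(logits, temperature):
    """Temperature-scaled softmax over raw scores."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def entropy_bits(probs):
    """Shannon entropy in bits: higher means choices are spread more evenly."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Hypothetical logits for four candidate next words
logits = [3.0, 2.0, 1.0, 0.5]

entropy_low = entropy_bits(token_probabilities(logits, 0.2))
entropy_high = entropy_bits(token_probabilities(logits, 0.9))

print(f"temperature 0.2: {entropy_low:.3f} bits")
print(f"temperature 0.9: {entropy_high:.3f} bits")
```

The higher temperature yields the larger entropy, which matches the more varied wording seen in the second response.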

Applications of Temperature in LLMs

Temperature settings play a vital role in various real-world applications:

- Chatbots and Conversational Agents: In the case of chatbots, you can change temperature according to your desired tone and engagement level. For instance, a customer service bot might be set at lower temperatures to provide clear and concise responses. On the other hand, social chatbots could use higher temperatures to maintain lively conversations that are more engaging.

- Content Generation and Creative Writing: Writers and marketers can use higher temperatures to bring more diversity and imagination into content. This applies to writing prompts, poetry, or whole articles that benefit from a wider spread of ideas and expressions.

- Data Augmentation and Machine Learning Experiments: In machine learning, you can generate synthetic data at different temperatures. This can help build diverse datasets, especially when training models that need exposure to varied situations and language styles.
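The data augmentation idea in the last item can be sketched as a loop over temperatures. Everything here is hypothetical: `fake_generate` is a stand-in for a real LLM call (it simply widens its pool of canned endings as temperature rises), and `build_dataset` is an illustrative helper, not part of any library.

```python
import random

def fake_generate(prompt, temperature, rng):
    """Stand-in for a real LLM call (hypothetical): higher temperature widens the pool."""
    endings = [
        "cuts emissions.",
        "reduces pollution.",
        "powers a cleaner future.",
        "is a gift from the sun and wind.",
    ]
    pool_size = max(1, min(len(endings), round(temperature * len(endings))))
    return f"{prompt} {rng.choice(endings[:pool_size])}"

def build_dataset(prompt, temperatures, samples_per_temperature, generate, seed=0):
    """Collect several generations per temperature, recording the setting used."""
    rng = random.Random(seed)
    rows = []
    for temperature in temperatures:
        for _ in range(samples_per_temperature):
            text = generate(prompt, temperature, rng)
            rows.append({"temperature": temperature, "text": text})
    return rows

dataset = build_dataset("Renewable energy", [0.2, 0.6, 1.0], 3, fake_generate)
for row in dataset:
    print(row)
```

Recording the temperature next to each sample makes it possible to filter or rebalance the dataset later if the high-temperature rows turn out to be too noisy.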

Future Trends and Potential Developments

In the future, LLMs may adjust temperature more intelligently. This could involve adaptive temperature control, where the model changes its temperature based on context or the stage of an interaction to strike a better balance between creativity and coherence.
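As a rough illustration of what stage-based adjustment might look like, the sketch below lowers the temperature linearly over the turns of a conversation, starting exploratory and ending focused. The schedule, the default values, and the function name `temperature_for_turn` are all assumptions for the example, not a description of how any existing model behaves.

```python
def temperature_for_turn(turn, total_turns, start=0.9, end=0.3):
    """Linearly interpolate from `start` (first turn) to `end` (last turn)."""
    if total_turns < 2:
        return end
    turn = max(0, min(turn, total_turns - 1))  # clamp out-of-range turn indices
    fraction = turn / (total_turns - 1)
    return start + (end - start) * fraction

# Hypothetical five-turn interaction
schedule = [round(temperature_for_turn(t, 5), 2) for t in range(5)]
print(schedule)
```

A real system could key the schedule on something richer than the turn index, such as the type of request detected in each message.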

Furthermore, as users become more familiar with LLM temperature settings, we may see more advanced tools and interfaces that make this parameter easy and intuitive to control precisely. This would help users across different fields get the most out of LLMs.
