In machine learning and data analysis, a feature vector is a fundamental concept: it determines how data is represented and processed by machine learning algorithms. Understanding what a feature vector is, and why it matters, is essential for anyone working in data science.

What is a Feature Vector?

A feature vector is an n-dimensional vector of numerical features that represent some object. In machine learning, these objects are typically data points that need to be classified, clustered, or otherwise analyzed. Each element of the feature vector corresponds to a specific feature of the data point.

Feature vectors serve as a compact and efficient way to encode data points for processing by machine learning algorithms. By converting raw data into numerical vectors, feature vectors make it possible to apply mathematical and statistical techniques to the data. This representation is crucial because machine learning models require numerical input to function correctly.

Feature vectors are the bridge between raw data and the abstract models used to make predictions, classifications, or recommendations. They encapsulate all the relevant information about the data points in a format that algorithms can easily process.

Role of Feature Vectors in Machine Learning

Feature vectors are pivotal in various machine-learning algorithms for several reasons:

- Input Representation: Machine learning algorithms require numerical input to process data. Feature vectors transform raw data into a numerical format suitable for these algorithms.

- Dimensionality Reduction: Techniques like Principal Component Analysis (PCA) or t-SNE take high-dimensional feature vectors as input and map them to lower-dimensional ones, making the data easier to visualize and analyze.

- Classification: Algorithms like Support Vector Machines (SVMs) and K-Nearest Neighbors (KNN) use feature vectors to classify data points into different categories.

- Regression: In regression analysis, feature vectors represent the independent variables that are used to predict a dependent variable.

- Clustering: Algorithms like K-Means and DBSCAN use feature vectors to group similar data points together based on their feature values.

- Neural Networks: Feature vectors are the input to neural networks, which learn to map these vectors to outputs through layers of weighted connections.

Feature vectors enable the application of sophisticated machine learning techniques to a wide variety of problems, from predicting customer churn to diagnosing diseases from medical images. By providing a standardized way to represent and process data, feature vectors are at the heart of modern data analysis and machine learning workflows.
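To make the classification role listed above concrete, here is a minimal sketch of K-Nearest Neighbors over feature vectors, written in plain Python. The two-dimensional training points, the labels "A" and "B", and the function name `knn_predict` are hypothetical and chosen only for illustration; a real project would more likely rely on an established library.

```python
import math
from collections import Counter

# Hypothetical labeled training data: (feature vector, class label).
training = [
    ([1.0, 1.2], "A"),
    ([0.8, 0.9], "A"),
    ([1.1, 0.7], "A"),
    ([4.0, 4.2], "B"),
    ([4.3, 3.9], "B"),
    ([3.8, 4.1], "B"),
]

def knn_predict(x, data, k=3):
    """Return the majority label among the k training vectors closest to x."""
    # Sort training points by Euclidean distance to x and keep the k nearest.
    neighbors = sorted(data, key=lambda item: math.dist(x, item[0]))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]

print(knn_predict([1.0, 1.0], training))  # A
print(knn_predict([4.1, 4.0], training))  # B
```

The algorithm never looks at the raw objects, only at distances between their feature vectors, which is why the choice of features matters so much.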

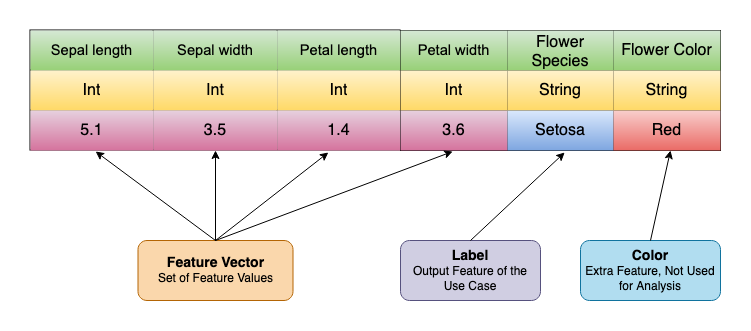

Examples of Feature Vectors

In this section, we will explore two examples of feature vectors: a basic example using the well-known Iris dataset and a more complex example involving customer data for a recommendation system.

Basic Example: The Iris Dataset

The Iris dataset is one of the most famous datasets in the field of machine learning. It contains 150 samples of iris flowers from three different species: Iris setosa, Iris versicolor, and Iris virginica. Each sample has four features:

- Sepal length (in cm)

- Sepal width (in cm)

- Petal length (in cm)

- Petal width (in cm)

Each of these features is a numerical value, and they collectively form the feature vector for each sample.

For instance, consider a single data point from the Iris dataset:

- Sepal length: 5.1 cm

- Sepal width: 3.5 cm

- Petal length: 1.4 cm

- Petal width: 0.2 cm

The feature vector for this data point is:

X = [5.1,3.5,1.4,0.2]

This feature vector represents the characteristics of a particular iris flower.
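As a small sketch of the same idea in Python, the measurements can be stored under named keys and converted to a vector with a fixed feature order. The key names and the helper `to_feature_vector` are assumptions made for this example, not part of the Iris dataset itself.

```python
# Fixed feature order, so that position i always refers to the same feature.
FEATURE_NAMES = ["sepal_length", "sepal_width", "petal_length", "petal_width"]

def to_feature_vector(sample):
    """Return the sample's measurements as a list ordered by FEATURE_NAMES."""
    return [float(sample[name]) for name in FEATURE_NAMES]

# The data point from the text, with hypothetical key names.
sample = {
    "sepal_length": 5.1,
    "sepal_width": 3.5,
    "petal_length": 1.4,
    "petal_width": 0.2,
}

x = to_feature_vector(sample)
print(x)  # [5.1, 3.5, 1.4, 0.2]
```

Keeping the order fixed matters because algorithms treat the vector purely by position: swapping two elements would silently swap the meaning of two features.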

More Complex Example: Customer Data for a Recommendation System

In real-world applications, feature vectors can be much more complex. Consider a recommendation system for an e-commerce platform where each customer can be represented by a feature vector that includes various types of information:

- Numerical Features:

  - Age: 29

  - Annual income: $50,000

- Categorical Features (one-hot encoded):

  - Gender: Male (represented as [0, 1])

  - Preferred shopping category: Electronics (encoded categories: [1, 0, 0, 0])

- Binary Features:

  - Subscribed to newsletter: Yes (represented as 1)

  - Premium Member: No (represented as 0)

- Behavioral Features:

  - Number of purchases in the last month: 5

  - Average rating of purchased products: 4.2

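Concatenating these features (age and income first, then gender, the two binary flags, the shopping category, and finally the behavioral features), the feature vector for this customer might look like:

X = [29,50000,0,1,1,0,1,0,0,0,5,4.2]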

This vector incorporates various types of data into a single representation, which can then be used by recommendation algorithms to suggest products.
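The following sketch shows one way such a vector could be assembled in Python. The element order used here is: age, income, one-hot gender, newsletter flag, premium flag, one-hot shopping category, purchases, average rating. The category lists, dictionary keys, and helper names are hypothetical; the text only specifies that Male maps to [0, 1] and Electronics to [1, 0, 0, 0].

```python
# Assumed category orders, chosen so the encodings match the text.
GENDERS = ["Female", "Male"]                                # Male -> [0, 1]
CATEGORIES = ["Electronics", "Clothing", "Home", "Books"]   # Electronics -> [1, 0, 0, 0]

def one_hot(value, categories):
    """Return a 0/1 list with a single 1 at the position of `value`."""
    # A value that is not in `categories` yields an all-zero list.
    return [1 if value == c else 0 for c in categories]

def customer_vector(c):
    """Concatenate numerical, categorical, binary, and behavioral features."""
    return (
        [c["age"], c["annual_income"]]
        + one_hot(c["gender"], GENDERS)
        + [int(c["newsletter"]), int(c["premium"])]
        + one_hot(c["category"], CATEGORIES)
        + [c["purchases_last_month"], c["avg_rating"]]
    )

customer = {
    "age": 29,
    "annual_income": 50000,
    "gender": "Male",
    "newsletter": True,
    "premium": False,
    "category": "Electronics",
    "purchases_last_month": 5,
    "avg_rating": 4.2,
}

x = customer_vector(customer)
print(x)  # [29, 50000, 0, 1, 1, 0, 1, 0, 0, 0, 5, 4.2]
```

Note that the features sit on very different scales (income in the tens of thousands next to 0/1 flags), so in practice many algorithms would need the numerical features rescaled before use.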

Types of Feature Vectors

There are several types of feature vectors, each designed to handle different kinds of data. Let’s explore numerical, categorical, textual, and image feature vectors.

Numerical Feature Vectors

Numerical feature vectors consist of features that are numerical values. These features can be continuous or discrete, and they represent quantifiable aspects of the data. Examples of numerical features include age, income, temperature, and number of purchases.

Example:

Consider a dataset of houses with features such as square footage, number of bedrooms, and price:

- Square footage: 2000

- Number of bedrooms: 3

- Price: $300,000

The feature vector for a house might look like this:

X = [2000,3,300000]

Numerical feature vectors are straightforward to work with and are compatible with a wide range of machine learning algorithms, including linear regression, k-means clustering, and neural networks.

Categorical Feature Vectors

Categorical feature vectors consist of features that represent categories or groups. These features are typically converted into numerical format through techniques such as one-hot encoding or label encoding. Categorical features are common in datasets involving demographic information, product categories, and more.

Example:

Consider a dataset of customers with features such as gender, preferred shopping category, and membership type:

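- Gender: Male (binary encoding: 1)

- Preferred shopping category: Electronics (label encoding: 2)

- Membership type: Premium (binary encoding: 0)

Using these integer codes, the feature vector might look like this:

X = [1,2,0]

Here, gender is encoded as 1 for Male, preferred shopping category (e.g., Clothing, Electronics, Home) is encoded as 2 for Electronics, and membership type (e.g., Premium, Basic) is encoded as 0 for Premium. Note that this is label (integer) encoding, not one-hot encoding. A one-hot encoding would instead expand each feature into one 0/1 element per category, as in the customer example earlier, which avoids implying an order among the categories.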

Categorical feature vectors are widely used in algorithms like decision trees, random forests, and gradient boosting.
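The difference between label encoding and one-hot encoding can be sketched in a few lines of Python. The category list below is hypothetical and ordered so that Electronics receives the code 2, as in the example; the function names are also assumptions for illustration.

```python
# Hypothetical, fixed list of known categories.
SHOPPING_CATEGORIES = ["Books", "Clothing", "Electronics", "Home"]

def label_encode(value, categories):
    """Map a category to its integer position in `categories`."""
    return categories.index(value)

def one_hot_encode(value, categories):
    """Map a category to a 0/1 list with a single 1 at its position."""
    vec = [0] * len(categories)
    vec[categories.index(value)] = 1
    return vec

print(label_encode("Electronics", SHOPPING_CATEGORIES))    # 2
print(one_hot_encode("Electronics", SHOPPING_CATEGORIES))  # [0, 0, 1, 0]
```

Label encoding keeps the vector short but implies that Home (3) is "greater than" Books (0), which can mislead distance-based or linear models. One-hot encoding avoids that at the cost of one extra dimension per category.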

Textual Feature Vectors

Textual feature vectors are derived from text data. Since text cannot be directly processed by machine learning algorithms, it needs to be converted into numerical form. Common techniques for creating textual feature vectors include Bag of Words (BoW), Term Frequency-Inverse Document Frequency (TF-IDF), and word embeddings like Word2Vec or GloVe.

Example:

Consider a dataset of customer reviews. A review like “Great product and excellent service” can be transformed into a feature vector using TF-IDF:

X = [0.3,0.4,0.5,0.2,…]

Each element of the vector represents the TF-IDF score of a particular word in the vocabulary.

Textual feature vectors are essential in natural language processing (NLP) tasks, including sentiment analysis, text classification, and information retrieval.
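As a rough sketch of how TF-IDF scores can be computed, the example below uses plain Python and one simple variant of the formula: term frequency (count divided by document length) times log(N / document frequency). Libraries typically add smoothing and normalization, so their numbers will differ. The second and third reviews are hypothetical and exist only to give the corpus more than one document.

```python
import math
from collections import Counter

# The first review comes from the text (lowercased); the others are hypothetical.
docs = [
    "great product and excellent service",
    "poor service and slow delivery",
    "great value",
]

tokenized = [d.split() for d in docs]
# The vocabulary fixes which word each vector position refers to.
vocab = sorted({word for doc in tokenized for word in doc})
n_docs = len(tokenized)
# Document frequency: in how many documents each word appears.
doc_freq = Counter(word for doc in tokenized for word in set(doc))

def tfidf_vector(tokens):
    """Return one TF-IDF score per vocabulary word for a tokenized document."""
    counts = Counter(tokens)
    return [
        (counts[word] / len(tokens)) * math.log(n_docs / doc_freq[word])
        for word in vocab
    ]

x = tfidf_vector(tokenized[0])
for word, score in zip(vocab, x):
    print(f"{word:10s} {score:.3f}")
```

Words that are absent from the review get a score of 0, and words that appear in fewer documents (such as "excellent") score higher than words shared across documents (such as "great").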

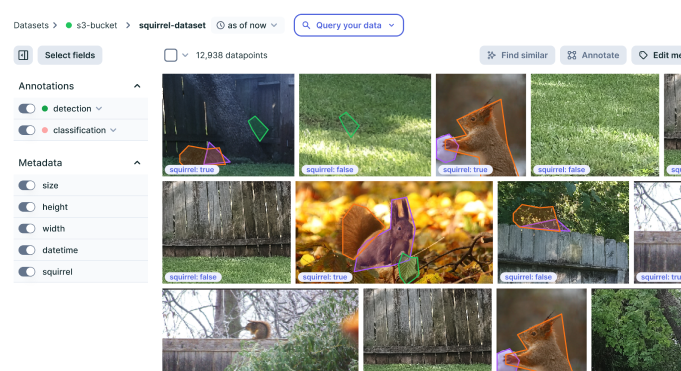

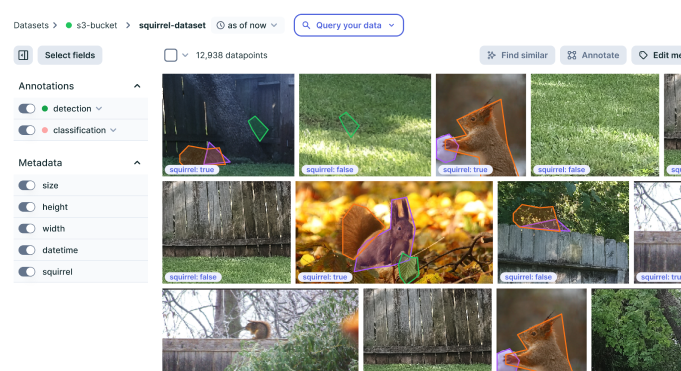

Image Feature Vectors

Image feature vectors are derived from image data. To convert images into numerical form, techniques such as pixel intensity values, histograms of oriented gradients (HOG), or features extracted using convolutional neural networks (CNNs) are used.

Example:

Consider an image dataset of handwritten digits. Each image is a 28×28 pixel grayscale image. Flattening the pixel grid row by row gives a feature vector of 784 (28 × 28) pixel intensity values, each between 0 and 255:

X = [0,255,128,…,64]

Alternatively, using CNNs, the feature vector might be a high-dimensional representation extracted from one of the network’s layers:

X = [0.1,0.3,0.6,…,0.4]

Image feature vectors are crucial in tasks such as image classification, object detection, and facial recognition.
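The pixel-intensity approach can be sketched as follows. The image here is synthetic (a hypothetical stand-in generated from its pixel coordinates) rather than a real handwritten digit, and the function name `flatten` is an assumption for this example.

```python
HEIGHT, WIDTH = 28, 28

# Synthetic grayscale image: a list of rows, each pixel an intensity in 0..255.
image = [[(r * WIDTH + c) % 256 for c in range(WIDTH)] for r in range(HEIGHT)]

def flatten(img):
    """Concatenate the rows of a 2-D image into one flat feature vector."""
    return [pixel for row in img for pixel in row]

x = flatten(image)
print(len(x))  # 784

# The pixel at row r, column c ends up at index r * WIDTH + c.
r, c = 3, 5
print(x[r * WIDTH + c] == image[r][c])  # True
```

Flattening discards the two-dimensional neighborhood structure of the image, which is one reason features extracted by CNNs often work better than raw pixels.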

Applications of Feature Vectors

Feature vectors are a cornerstone of machine learning and data analysis, representing data in a format that algorithms can process. Let’s look at some of the domains where they are most widely used.

Machine Learning Models

Feature vectors are essential in building and training machine learning models. They provide a structured, numerical representation of data, enabling regression, classification, and clustering algorithms to learn patterns and make predictions.

Natural Language Processing (NLP)

Feature vectors are crucial in NLP, where they transform text data into numerical form to be processed by algorithms. Text classification, Named Entity Recognition (NER), and machine translation are some of the popular NLP use cases that rely on feature vectors.

Computer Vision

Feature vectors are widely used in computer vision, where they represent images or parts of images in a numerical format. They underpin tasks such as image classification, object detection, and image segmentation.

Recommender Systems

Feature vectors are vital in recommender systems, where they represent users and items to provide personalized recommendations. Popular approaches such as collaborative filtering and content-based filtering compare user and item feature vectors (for example, vectors built from user profiles) to recommend products and services.
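A minimal content-based sketch of this idea: if a user and each item are described by vectors over the same features, items can be ranked by cosine similarity to the user. The three-feature layout ([electronics, clothing, home]), the item names, and all the numbers below are hypothetical.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)

# Hypothetical affinities over the features [electronics, clothing, home].
user = [0.9, 0.1, 0.0]
items = {
    "headphones": [1.0, 0.0, 0.0],
    "jacket": [0.0, 1.0, 0.0],
    "lamp": [0.0, 0.2, 0.8],
}

# Rank items from most to least similar to the user's vector.
ranked = sorted(items, key=lambda name: cosine_similarity(user, items[name]), reverse=True)
print(ranked)  # ['headphones', 'jacket', 'lamp']
```

Cosine similarity compares the direction of two vectors rather than their length, so a user with few interactions and a user with many can still be matched to the same kinds of items.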
